Markerless Computer Vision Tracking from SketchAR is the most powerful CV-tracking solution for surfaces.

Computer vision is a complex field that depends on continuous research and development. Here we explain why, and how, we solved one of its crucial problems: the fear of a flat surface.

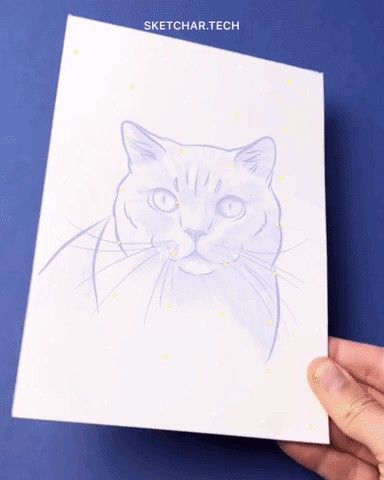

SketchAR works with the flat surfaces a smartphone camera sees when it is pointed at a white piece of paper or a wall. To understand this environment, we had to invent methods and algorithms that work with all kinds of surfaces. We have already described how Markerless Tracking works in an earlier article. Here we want to share its use cases, its potential, and the fields where it could be applied.

SketchAR's markerless tracking method detects flat surfaces. It also stays stable in extreme conditions, for example when the camera cannot see the environment clearly, or when it is zoomed in and sees only a white surface.

SketchAR vs. ARKit/ARCore

Here we compare SketchAR Tracking with the most popular solutions from Apple and Google: ARKit and ARCore. We used the same device under the same conditions: extreme zoom and a moving piece of paper.

White on white

Another SketchAR feature worth pointing out is detecting surfaces of the same or similar color, such as a white piece of paper on a white table.

Here are a few features we would like to point out:

- How easily the camera recognizes a difficult surface such as a white piece of paper on a white table.

- How stable the virtual image remains when the camera cannot see the environment clearly, for example when it is zoomed in and sees only a white surface.

- How far the paper can move outside the camera's view. Here the camera sees only 25% of the paper, yet the virtual image stays very stable.

- How the virtual image stays ‘glued’ to the surface when a hand moves the paper to different angles.

Outdoor

Another good example of Markerless Tracking working in different conditions is our outdoor test on a street wall. We found a section of wall that looks like a canvas, chose a sketch from the app library, placed it on the wall, and moved as close to the wall as we could: 10 cm from the surface. The virtual image stayed stable enough, even when we moved the smartphone left or right.

Sometimes a wall is 100% white, and the camera cannot find anything to focus on to work correctly. We already have a solution for this and plan to release it in a kernel update soon.

How it works

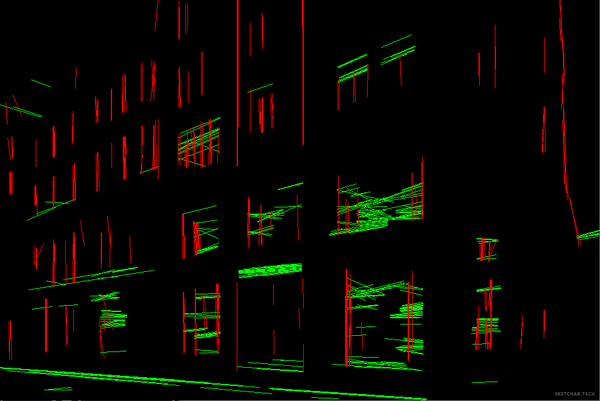

We have already described how we use machine learning and neural networks to analyze large amounts of data from our users so that the app works reliably. Here is what we analyze:

- Surfaces (types, structure, etc.).

- Paper (shapes, dimensions).

- Additional objects, to separate them from the surface, since they act as ‘noise’ for the camera.

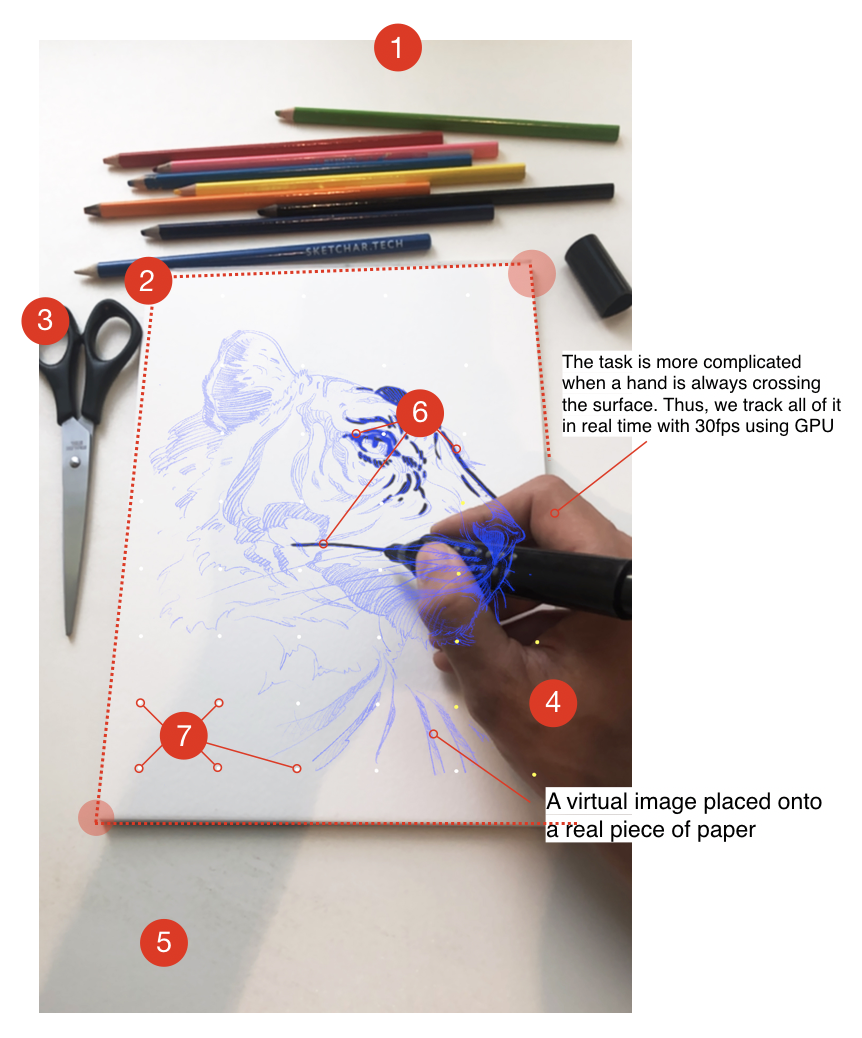

- Hands, which are detected using a convolutional neural network.

- Shadows, because they always break CV tracking.

- The user's drawings, to find inconsistencies with the original image.

- Surface (paper) structure recognition based on a Matrix of Transformation (ML+NN). A smartphone camera can distinguish many tones of similar white (or of any other flat color).

- All points in the grid are connected in real time. When re-initialization occurs, the points in the grid return to their original positions: when a hand leaves the frame or moves to another square, the pixels at those points' positions are recognized again.
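The ‘Matrix of Transformation’ and the grid re-initialization described above can be illustrated with a minimal sketch. It assumes the matrix is a 3x3 planar transformation applied in homogeneous coordinates, and that a per-point occlusion flag (for example, from the hand detector) is already available. `apply_homography`, `update_grid`, and the occlusion input are hypothetical names for illustration, not SketchAR's kernel.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
Matrix3 = Sequence[Sequence[float]]


def apply_homography(H: Matrix3, p: Point) -> Point:
    """Map a 2D point through a 3x3 transformation matrix in homogeneous coordinates."""
    x, y = p
    u = H[0][0] * x + H[0][1] * y + H[0][2]
    v = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return (u / w, v / w)


def update_grid(reference: List[Point], H: Matrix3,
                occluded: List[bool], detected: List[Point]) -> List[Point]:
    """Hypothetical grid update.

    Visible points keep their fresh detection; hidden points (e.g. under a hand)
    are re-initialized to their original reference position, carried into the
    current frame by H, until they can be recognized again.
    """
    grid = []
    for ref, hidden, det in zip(reference, occluded, detected):
        grid.append(apply_homography(H, ref) if hidden else det)
    return grid


# Usage: the paper has shifted by (+5, -2); the second point is under a hand,
# so its detection is ignored and it falls back to the projected reference.
H = [[1.0, 0.0, 5.0], [0.0, 1.0, -2.0], [0.0, 0.0, 1.0]]
reference = [(0.0, 0.0), (1.0, 0.0)]
grid = update_grid(reference, H, occluded=[False, True],
                   detected=[(5.1, -2.0), (99.0, 99.0)])
```

Falling back to the reference point projected through the current matrix keeps the grid consistent with the rest of the surface while part of it is hidden.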

This approach lets us collect data from real SketchAR users (out of 100K users, about 80% allow us to do so). Our kernel analyzes this data as connections and relationships between anchor points, then corrects the position of the virtual object relative to the real surface in real time, at 30 fps on the GPU, without lag.
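Correcting the virtual object's position from anchor points can be sketched with the textbook direct linear transform (DLT): four anchor correspondences between the reference image and the current frame determine a 3x3 homography, which then places the virtual image in the frame. This generic technique is swapped in for illustration and is not SketchAR's kernel; all names are hypothetical, and a production tracker would typically fit many more anchors with a robust estimator. At 30 fps, each frame has a budget of roughly 33.3 ms.

```python
from typing import List, Tuple

Point = Tuple[float, float]
Matrix = List[List[float]]

# At 30 fps each frame must be processed in roughly 33.3 ms.
FRAME_BUDGET_MS = 1000.0 / 30


def solve_linear(A: Matrix, b: List[float]) -> List[float]:
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [row[:] + [bi] for row, bi in zip(A, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[pivot][col]) < 1e-12:
            raise ValueError("degenerate anchor configuration")
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))
        x[r] = s / M[r][r]
    return x


def homography_from_anchors(src: List[Point], dst: List[Point]) -> Matrix:
    """Hypothetical: DLT homography from exactly four anchor pairs, with h33 fixed to 1."""
    A: Matrix = []
    b: List[float] = []
    for (x, y), (u, v) in zip(src, dst):
        # u * (h6*x + h7*y + 1) = h0*x + h1*y + h2, rearranged to be linear in h
        A.append([x, y, 1.0, 0.0, 0.0, 0.0, -u * x, -u * y])
        b.append(u)
        # v * (h6*x + h7*y + 1) = h3*x + h4*y + h5
        A.append([0.0, 0.0, 0.0, x, y, 1.0, -v * x, -v * y])
        b.append(v)
    h = solve_linear(A, b)
    return [h[0:3], h[3:6], h[6:8] + [1.0]]


def project(H: Matrix, p: Point) -> Point:
    """Map a point through H using homogeneous coordinates."""
    x, y = p
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return ((H[0][0] * x + H[0][1] * y + H[0][2]) / w,
            (H[1][0] * x + H[1][1] * y + H[1][2]) / w)


# Usage: anchors on the reference sketch (unit square) and where the tracker
# found them in the current frame (scaled by 2, shifted by (10, 20)).
reference = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
observed = [(10.0, 20.0), (12.0, 20.0), (12.0, 22.0), (10.0, 22.0)]
H = homography_from_anchors(reference, observed)
center = project(H, (0.5, 0.5))  # center of the virtual image in the frame
```

Fixing h33 to 1 turns the fit into an 8x8 linear system that the standard library can solve; four anchors with no three collinear are enough to make it well-posed.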

Our world consists of surfaces. Our goal is to teach machines to understand the environment much better and to interact with the world more precisely.


Cheers,

SketchAR Team